Today we will explain some basic concepts behind Python crawlers. Many friends who are new to Python have questions such as: What is a Python crawler? And why is Python so often called a crawler?

What is a Python crawler?

Before getting into the details, we first need to know what a crawler is. A crawler, or web crawler, can be thought of as a spider crawling across the Internet. The Internet is like a big web, and the crawler is a spider moving around on it. When it encounters its prey (the resources it needs), it grabs them. For example, while crawling one web page it may find a path to another page, which is actually a hyperlink; by following that link it can crawl to the other page and obtain its data. If this is hard to picture, the following picture may help:

Because Python is a scripting language, it is easy to set up and very flexible at processing text, and it has a rich set of web-crawling modules, so the two are often mentioned together. A Python crawler starts from one page of a website (usually the home page), reads the content of that page, finds the other link addresses in it, and then uses those addresses to reach the next pages. This cycle continues until every page of the website has been crawled. If the entire Internet is regarded as one website, a web spider can in principle use the same approach to crawl every page on the Internet.
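The link-following cycle described above is essentially a breadth-first traversal of a site's link graph. Below is a minimal sketch using only the standard library. The `fetch` callable is a hypothetical stand-in for whatever download function you use (it is passed in so the logic can be tried without a network), and the `max_pages` cap is an assumption added to keep the crawl bounded.

```python
from collections import deque
from html.parser import HTMLParser
from typing import Callable, List, Optional, Tuple
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[str] = []

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl_site(start_url: str, fetch: Callable[[str], str], max_pages: int = 100) -> List[str]:
    """Breadth-first crawl limited to the host of start_url.

    fetch is a hypothetical callable that returns the HTML of a URL.
    Returns the URLs in the order they were visited.
    """
    host = urlparse(start_url).netloc
    queue = deque([start_url])   # pages found but not yet read
    seen = {start_url}           # every URL ever queued, to avoid repeats
    visited: List[str] = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        extractor = LinkExtractor()
        extractor.feed(fetch(url))
        for href in extractor.links:
            # Resolve relative links and drop "#fragment" parts.
            absolute = urljoin(url, href).split("#", 1)[0]
            # Stay on the same website and skip anything already queued.
            if urlparse(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited
```

Restricting links to the start URL's host is what keeps this sketch on "the website"; dropping that check would let it wander across the wider web, as the paragraph above describes.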

A crawler can fetch the content of a website or app and extract useful information from it. It can also simulate user actions in a browser or app to automate tasks. Crawlers make the following possible:

Ticket-grabbing tools

Voting tools

Prediction (stock market prediction, box office prediction)

National sentiment analysis

Social relationship networks

From the above, we can conclude that crawling generally refers to fetching network resources. Python, as a scripting language, is easy to configure and very flexible at text processing, and it also has rich web-crawling modules, so the two are often mentioned together. This is why Python is so often called a crawler language.

Why is Python called a crawler?

As a programming language, Python is free and open-source software. Programmers love it for its concise, clear syntax and its mandatory indentation, which makes the structure of the code visible at a glance. As a rough illustration: a task that takes about 1,000 lines of code in C might take 100 lines in Java and only 20 lines in Python. Writing in Python usually means less code that is shorter and more readable, so team members can read each other's code faster and development is more efficient.

This makes Python very well suited to developing web crawlers. Compared with statically typed languages, Python offers a simpler interface for fetching web documents; compared with other dynamic scripting languages, Python's urllib2 package (urllib.request in Python 3) provides a fairly complete API for accessing web documents. In addition, Python has excellent third-party packages that fetch web pages efficiently and can filter a page's tags with very little code.
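As a rough illustration of how little code fetching and tag filtering take, here is a sketch built on `urllib.request` (the Python 3 home of the urllib2 functionality) and the standard `html.parser` module rather than a third-party package. The function names, the `timeout` default, and the `User-Agent` value are assumptions chosen for illustration.

```python
import urllib.request
from html.parser import HTMLParser


def download(url: str, timeout: float = 10.0) -> str:
    """Fetch a page with urllib.request and decode it to text."""
    # Hypothetical User-Agent string; many sites reject requests without one.
    request = urllib.request.Request(url, headers={"User-Agent": "example-crawler/0.1"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class TextExtractor(HTMLParser):
    """Drops all tags and keeps visible text, skipping <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self.parts = []
        self._skip = 0  # depth inside <script>/<style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.parts.append(data.strip())


def strip_tags(html: str) -> str:
    """Return the text of an HTML document with all tags filtered out."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)
```

With network access, `strip_tags(download("https://example.com/"))` returns the page's visible text. Third-party parsers add richer selection (CSS selectors, tree navigation), but the idea is the same.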

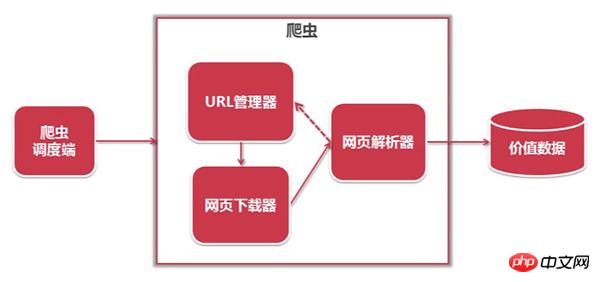

The structure of a Python crawler is as follows:

1. URL manager: manages the set of URLs to be crawled and the set of URLs already crawled, and sends URLs to be crawled to the web page downloader;


2. Web page downloader: downloads the web page for each URL, stores it as a string, and sends it to the web page parser;

3. Web page parser: extracts valuable data and stores it, and adds newly found URLs to the URL manager.
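The URL manager from point 1 can be sketched as two sets: one for URLs waiting to be crawled and one for URLs already crawled. The class and method names below are hypothetical; sets are used so that duplicate checks are fast, at the cost of not preserving crawl order.

```python
class UrlManager:
    """Hypothetical URL manager: tracks URLs to crawl and URLs already crawled."""

    def __init__(self):
        self.new_urls = set()  # waiting to be crawled
        self.old_urls = set()  # already handed to the downloader

    def add_new_url(self, url):
        # Ignore empty values and anything already queued or crawled.
        if url and url not in self.new_urls and url not in self.old_urls:
            self.new_urls.add(url)

    def add_new_urls(self, urls):
        # Called with the list of links the parser found on a page.
        for url in urls:
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.new_urls) > 0

    def get_new_url(self):
        # Move one URL from "to crawl" to "crawled" and return it.
        url = self.new_urls.pop()
        self.old_urls.add(url)
        return url
```

Because a crawled URL moves into `old_urls`, the parser can hand back every link it sees and the manager alone decides which ones are genuinely new.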

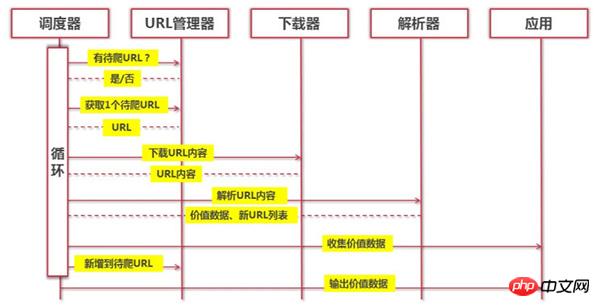

The workflow of a Python crawler is as follows:

The crawler first asks the URL manager whether there are URLs waiting to be crawled. If there are, the scheduler passes one to the downloader, which downloads the URL's content; the scheduler then sends that content to the parser, which extracts the valuable data and a list of new URLs. Finally, the scheduler passes the data to the application, which outputs the valuable information, and the new URLs go back to the URL manager.
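The workflow can be sketched as a single scheduler loop. This is a minimal version under stated assumptions: `download` and `parse` are hypothetical callables standing in for the downloader and parser, a deque plus a set play the role of the URL manager, and the returned list stands in for the application's output.

```python
from collections import deque


def run_crawler(root_url, download, parse, max_pages=50):
    """Scheduler loop: URL manager -> downloader -> parser -> application.

    download(url) -> str or None, and parse(url, html) -> (new_urls, data),
    are hypothetical callables standing in for the downloader and parser.
    """
    to_crawl = deque([root_url])  # URL manager: URLs waiting to be crawled
    crawled = set()               # URL manager: URLs already crawled
    results = []                  # valuable data handed to the application
    while to_crawl and len(crawled) < max_pages:
        url = to_crawl.popleft()
        if url in crawled:
            continue              # queued twice before it was crawled
        crawled.add(url)
        html = download(url)      # downloader
        if not html:
            continue              # failed or empty download
        new_urls, data = parse(url, html)  # parser
        for new_url in new_urls:
            if new_url not in crawled:
                to_crawl.append(new_url)   # new URLs go back to the URL manager
        if data is not None:
            results.append(data)  # value data goes to the application
    return results
```

Keeping the downloader and parser as parameters mirrors the separation of components in the diagram: either one can be swapped (for example, a `urllib.request`-based downloader) without touching the scheduling logic.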


Python is a programming language that is very well suited to developing web crawlers. It offers modules such as urllib, re, and json in the standard library and pyquery as a third-party package, and it has many mature frameworks, such as the Scrapy framework and the PySpider crawler system. Because it is simple and convenient, it is a preferred language for writing web crawlers. I hope this article helps friends who have just started with Python.
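To show two of the standard-library modules named above working together, here is a sketch that uses `re` to pull links out of downloaded HTML and `json` to store them. The pattern is a simplifying assumption (double-quoted `href`, no nested anchors); regular expressions are brittle on real HTML, which is one reason parsing libraries such as pyquery exist.

```python
import json
import re

# Assumed pattern: <a ... href="URL" ...>text</a>, case-insensitive, text may span lines.
LINK_RE = re.compile(r'<a\s+[^>]*href="([^"]+)"[^>]*>(.*?)</a>', re.IGNORECASE | re.DOTALL)


def extract_links_as_json(html: str) -> str:
    """Find anchor tags with re and serialize them as a JSON list of objects."""
    items = [{"url": url, "text": text.strip()} for url, text in LINK_RE.findall(html)]
    # ensure_ascii=False keeps non-ASCII link text readable in the output.
    return json.dumps(items, ensure_ascii=False)
```

The JSON string can be written to a file or passed on as the "valuable data" a crawler outputs.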

The above is the detailed content of "What is a Python crawler? Why is Python called a crawler?". For more information, please follow other related articles on the PHP Chinese website!
