GPT-4o and LangGraph Tutorial: Build a TNT-LLM Application

Mar 05, 2025, 10:56 AM

Microsoft's TNT-LLM: Revolutionizing Taxonomy Generation and Text Classification

Microsoft has unveiled TNT-LLM, a groundbreaking system automating taxonomy creation and text classification, surpassing traditional methods in both speed and accuracy. This innovative approach leverages the power of large language models (LLMs) to streamline and scale the generation of taxonomies and classifiers, minimizing manual intervention. This is particularly beneficial for applications like Bing Copilot, where managing dynamic and diverse textual data is paramount.

This article demonstrates TNT-LLM's implementation using GPT-4o and LangGraph for efficient news article clustering. For further information on GPT-4o and LangGraph, consult these resources:

- What Is OpenAI's GPT-4o?

- GPT-4o API Tutorial: Getting Started with OpenAI's API

- LangGraph Tutorial: What Is LangGraph and How to Use It?

The original TnT-LLM research paper, "TnT-LLM: Text Mining at Scale with Large Language Models," provides comprehensive details on the system.

Understanding TNT-LLM

TNT-LLM (Taxonomy and Text Classification using Large Language Models) is a two-stage framework: it first generates a label taxonomy from textual data, then uses that taxonomy to classify text at scale.

Phase 1: Taxonomy Generation

This initial phase utilizes a sample of text documents and a specific instruction (e.g., "generate a taxonomy to cluster news articles"). An LLM summarizes each document, extracting key information. Through iterative refinement, the LLM builds, modifies, and refines the taxonomy, resulting in a structured hierarchy of labels and descriptions for effective news article categorization.
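The refinement loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's or the tutorial's code: `build_taxonomy` and the three `fake_*` callables are hypothetical names, and the fakes stand in for LLM prompts so the sketch runs without an API key.

```python
from typing import Callable, Dict, List

# Each taxonomy entry is a label plus a short description (assumed shape).
Taxonomy = List[Dict[str, str]]


def build_taxonomy(
    summaries: List[str],
    batch_size: int,
    generate: Callable[[List[str]], Taxonomy],
    update: Callable[[Taxonomy, List[str]], Taxonomy],
    review: Callable[[Taxonomy], Taxonomy],
) -> Taxonomy:
    """Generate from the first batch, update on each later batch, then review."""
    if not summaries:
        return []
    batches = [summaries[i:i + batch_size] for i in range(0, len(summaries), batch_size)]
    taxonomy = generate(batches[0])
    for batch in batches[1:]:
        taxonomy = update(taxonomy, batch)
    return review(taxonomy)


# Hypothetical stand-ins for the LLM calls: the first word of a summary is its label.
def fake_generate(batch: List[str]) -> Taxonomy:
    return fake_update([], batch)


def fake_update(taxonomy: Taxonomy, batch: List[str]) -> Taxonomy:
    known = {entry["name"] for entry in taxonomy}
    result = list(taxonomy)
    for summary in batch:
        name = summary.split()[0].lower()
        if name not in known:
            known.add(name)
            result.append({"name": name, "description": summary})
    return result


def fake_review(taxonomy: Taxonomy) -> Taxonomy:
    return sorted(taxonomy, key=lambda entry: entry["name"])


summaries = [
    "Sports roundup of weekend games",
    "Politics vote on the budget",
    "Sports transfer news",
    "Tech chip launch",
]
taxonomy = build_taxonomy(summaries, 2, fake_generate, fake_update, fake_review)
print([entry["name"] for entry in taxonomy])
```

In a real run, each callable would wrap a prompt to the LLM; the control flow (one generation pass, several update passes, one review pass) is the part this sketch is meant to show.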

Source: Mengting Wan et al.

Phase 2: Text Classification

The second phase employs the generated taxonomy to label a larger dataset. The LLM applies these labels, creating training data for a lightweight classifier (like logistic regression). This trained classifier efficiently labels the entire dataset or performs real-time classification.
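To make the second phase concrete, here is a small, dependency-free sketch: an LLM stand-in produces pseudo-labels for a sample, and a one-vs-rest logistic regression is trained on them. Everything here is an assumption for illustration: `fake_llm_label` replaces the real LLM call, and bag-of-words counts replace whatever features or embeddings a real pipeline would use. In practice a library implementation of logistic regression would likely take the place of the hand-written training loop.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def featurize(text: str) -> Counter:
    """Bag-of-words counts; a simple stand-in for real text features."""
    return Counter(text.lower().split())


def score(x: Counter, w: Dict[str, float], b: float) -> float:
    return b + sum(w.get(tok, 0.0) * cnt for tok, cnt in x.items())


def train_one_vs_rest(
    texts: List[str], labels: List[str], epochs: int = 200, lr: float = 0.5
) -> Tuple[Dict[str, Dict[str, float]], Dict[str, float]]:
    """Fit one logistic-regression model per label with stochastic gradient descent."""
    feats = [featurize(t) for t in texts]
    weights: Dict[str, Dict[str, float]] = {label: {} for label in set(labels)}
    bias: Dict[str, float] = {label: 0.0 for label in weights}
    for label, w in weights.items():
        for _ in range(epochs):
            for x, y in zip(feats, labels):
                target = 1.0 if y == label else 0.0
                z = max(-30.0, min(30.0, score(x, w, bias[label])))  # avoid overflow
                err = 1.0 / (1.0 + math.exp(-z)) - target
                bias[label] -= lr * err
                for tok, cnt in x.items():
                    w[tok] = w.get(tok, 0.0) - lr * err * cnt
    return weights, bias


def predict(text: str, weights: Dict[str, Dict[str, float]], bias: Dict[str, float]) -> str:
    x = featurize(text)
    return max(weights, key=lambda label: score(x, weights[label], bias[label]))


def fake_llm_label(text: str) -> str:
    """Hypothetical stand-in for the LLM that assigns a taxonomy label."""
    words = set(text.lower().split())
    return "sports" if words & {"match", "team"} else "business"


sample = [
    "team wins match",
    "the match ended in a draw",
    "shares rise as markets rally",
    "bank reports profit",
]
pseudo_labels = [fake_llm_label(t) for t in sample]  # LLM labels the sample
weights, bias = train_one_vs_rest(sample, pseudo_labels)  # cheap model learns from them
print(predict("team loses match", weights, bias))
```

The design point is the division of labor: the expensive model labels only a sample, and the cheap classifier handles the rest of the corpus or incoming traffic.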

Source: Mengting Wan et al.

TNT-LLM's adaptable nature makes it suitable for various text classification tasks, including intent detection and topic categorization.

Advantages of TNT-LLM

TNT-LLM offers significant advantages for large-scale text mining and classification:

- Automated Taxonomy Generation: Automates the creation of detailed and interpretable taxonomies from raw text, eliminating the need for extensive manual effort and domain expertise.

- Scalable Classification: Enables scalable text classification using lightweight models that handle large datasets and real-time classification efficiently.

- Cost-Effectiveness: Optimizes resource usage by matching model size to the task (e.g., GPT-4 for taxonomy generation, GPT-3.5-Turbo for summarization, and logistic regression for final classification).

- High-Quality Outputs: Iterative taxonomy generation ensures high-quality, relevant, and accurate categorizations.

- Minimal Human Intervention: Reduces manual input, minimizing potential biases and inconsistencies.

- Flexibility: Adapts to diverse text classification tasks and domains, supporting integration with various LLMs, embedding methods, and classifiers.

Implementing TNT-LLM

A step-by-step implementation guide follows:

Installation:

Install necessary packages:

pip install langgraph langchain langchain_openai

Set environment variables for API keys and model names:

export AZURE_OPENAI_API_KEY='your_api_key_here'
export AZURE_OPENAI_MODEL='your_deployment_name_here'
export AZURE_OPENAI_ENDPOINT='deployment_endpoint'
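One way to consume these variables from Python is sketched below. The helper names `load_azure_settings` and `build_llm` are hypothetical; the sketch also assumes the Azure API version is supplied separately through the `OPENAI_API_VERSION` environment variable, which is not part of the exports above.

```python
import os
from typing import Dict, Mapping

REQUIRED_VARS = ("AZURE_OPENAI_API_KEY", "AZURE_OPENAI_MODEL", "AZURE_OPENAI_ENDPOINT")


def load_azure_settings(env: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect the three exported variables, failing early if any is missing."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {
        "api_key": env["AZURE_OPENAI_API_KEY"],
        "azure_deployment": env["AZURE_OPENAI_MODEL"],
        "azure_endpoint": env["AZURE_OPENAI_ENDPOINT"],
    }


def build_llm(settings: Dict[str, str]):
    """Create the chat model; imported lazily so the settings check runs without the package."""
    from langchain_openai import AzureChatOpenAI

    # Assumption: OPENAI_API_VERSION is set in the environment for the API version.
    return AzureChatOpenAI(temperature=0, **settings)
```

Calling `build_llm(load_azure_settings())` once at startup surfaces a missing variable immediately instead of at the first model call.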

Core Concepts:

- Documents: Raw text data (articles, chat logs) structured using the Doc class.

- Taxonomies: Clusters of categorized intents or topics, managed by the TaxonomyGenerationState class.
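A possible shape for these two classes is sketched below as typed dictionaries. The field names are assumptions chosen for illustration, since the article does not list them. The `Annotated[..., operator.add]` hint follows the LangGraph convention of attaching a reducer to a state key, so that values returned by a node are appended to the existing list rather than replacing it.

```python
import operator
from typing import Annotated, List, Optional, TypedDict


class Doc(TypedDict):
    """One raw document plus the fields the pipeline fills in later (assumed fields)."""
    id: str
    content: str
    summary: Optional[str]
    explanation: Optional[str]
    category: Optional[str]


class TaxonomyGenerationState(TypedDict):
    """Shared state passed between graph nodes (assumed fields)."""
    documents: List[Doc]
    # Each minibatch is a list of indices into `documents`.
    minibatches: List[List[int]]
    # One taxonomy revision per step; the reducer appends new revisions.
    clusters: Annotated[List[List[dict]], operator.add]


doc: Doc = {
    "id": "a1",
    "content": "Local team wins the championship.",
    "summary": None,
    "explanation": None,
    "category": None,
}
state: TaxonomyGenerationState = {"documents": [doc], "minibatches": [], "clusters": []}
```

Keeping every revision in `clusters` makes it easy to inspect how the taxonomy changed from one minibatch to the next.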

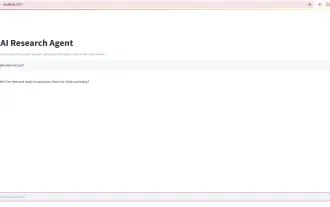

Building a Simple TNT-LLM Application:

The original implementation is too long to reproduce in full here, so the steps below give a structured overview of the process:

- Step 0: Define Graph State Class, Load Datasets, and Initialize GPT-4o: This involves defining the data structures and loading the news articles dataset. A GPT-4o model is initialized for use throughout the pipeline.

- Step 1: Summarize Documents: Each document is summarized using an LLM prompt.

- Step 2: Create Minibatches: Summarized documents are divided into minibatches for parallel processing.

- Step 3: Generate Initial Taxonomy: An initial taxonomy is generated from the first minibatch.

- Step 4: Update Taxonomy: The taxonomy is iteratively updated as subsequent minibatches are processed.

- Step 5: Review Taxonomy: The final taxonomy is reviewed for accuracy and relevance.

- Step 6: Orchestrating the TNT-LLM Pipeline with StateGraph: A StateGraph orchestrates the execution of the various steps.

- Step 7: Clustering and Displaying TNT-LLM's News Article Taxonomy: The final taxonomy is displayed, showing the clusters of news articles.
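The control flow of Steps 1 to 6 can be sketched in plain Python. This is deliberately not LangGraph code: `run_pipeline` is a hypothetical stand-in for a compiled StateGraph, and `propose` is a hypothetical stand-in for the LLM prompts. What it does mirror is the pattern a state graph relies on: each node reads the shared state and returns a partial update, and a condition decides whether to loop back to the update step or move on to review.

```python
import random
from typing import Any, Dict, List

State = Dict[str, Any]


def propose(state: State, batch_index: int, current: List[dict]) -> List[dict]:
    """Hypothetical LLM stand-in: the first word of each summary becomes a category."""
    names = {c["name"] for c in current}
    result = list(current)
    for i in state["minibatches"][batch_index]:
        name = state["documents"][i]["summary"].split()[0].lower()
        if name not in names:
            names.add(name)
            result.append({"name": name})
    return result


def summarize(state: State) -> State:
    # Step 1: stand-in summary is the first five words of the content.
    docs = [{**d, "summary": " ".join(d["content"].split()[:5])} for d in state["documents"]]
    return {"documents": docs}


def get_minibatches(state: State, batch_size: int = 2, seed: int = 0) -> State:
    # Step 2: shuffle document indices and slice them into fixed-size batches.
    indices = list(range(len(state["documents"])))
    random.Random(seed).shuffle(indices)
    batches = [indices[i:i + batch_size] for i in range(0, len(indices), batch_size)]
    return {"minibatches": batches}


def generate_taxonomy(state: State) -> State:
    # Step 3: first revision comes from the first minibatch.
    return {"clusters": state["clusters"] + [propose(state, 0, [])]}


def update_taxonomy(state: State) -> State:
    # Step 4: revision k is built from minibatch k and the previous revision.
    step = len(state["clusters"])
    return {"clusters": state["clusters"] + [propose(state, step, state["clusters"][-1])]}


def review_taxonomy(state: State) -> State:
    # Step 5: stand-in review just sorts the final categories.
    final = sorted(state["clusters"][-1], key=lambda c: c["name"])
    return {"clusters": state["clusters"] + [final]}


def run_pipeline(documents: List[dict]) -> State:
    """Step 6 stand-in; assumes at least one document."""
    state: State = {"documents": documents, "minibatches": [], "clusters": []}
    for node in (summarize, get_minibatches, generate_taxonomy):
        state.update(node(state))
    # Conditional edge: keep updating until every minibatch has been processed.
    while len(state["clusters"]) < len(state["minibatches"]):
        state.update(update_taxonomy(state))
    state.update(review_taxonomy(state))
    return state


docs = [
    {"id": str(i), "content": text}
    for i, text in enumerate([
        "Sports final draws record crowd",
        "Politics debate over new budget",
        "Sports star signs new contract",
        "Tech firm unveils faster chip",
        "Politics summit ends without deal",
    ])
]
final_state = run_pipeline(docs)
print([c["name"] for c in final_state["clusters"][-1]])
```

With LangGraph, the same wiring would typically be expressed by registering each function with `add_node`, linking them with `add_edge`, and expressing the loop condition with `add_conditional_edges` before calling `compile`.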

Conclusion

TNT-LLM offers a powerful and efficient solution for large-scale text mining and classification. Its automation capabilities significantly reduce the time and resources required for analyzing unstructured text data, enabling data-driven decision-making across various domains. The potential for further development and application across industries is substantial. For those interested in further LLM application development, a course on "Developing LLM Applications with LangChain" is recommended.
