This tutorial demonstrates fine-tuning the Llama 3.1 8B Instruct model for mental health sentiment analysis. We'll customize the model to predict a patient's mental health status from text, merge the adapter with the base model, and publish the merged model to the Hugging Face Hub. Ethical considerations are paramount when using AI in healthcare; this example is for illustrative purposes only.

We'll cover accessing Llama 3.1 models via Kaggle, using the Transformers library for inference, and the fine-tuning process itself. A prior understanding of LLM fine-tuning (see our "An Introductory Guide to Fine-Tuning LLMs") is beneficial.

Image by Author

Understanding Llama 3.1

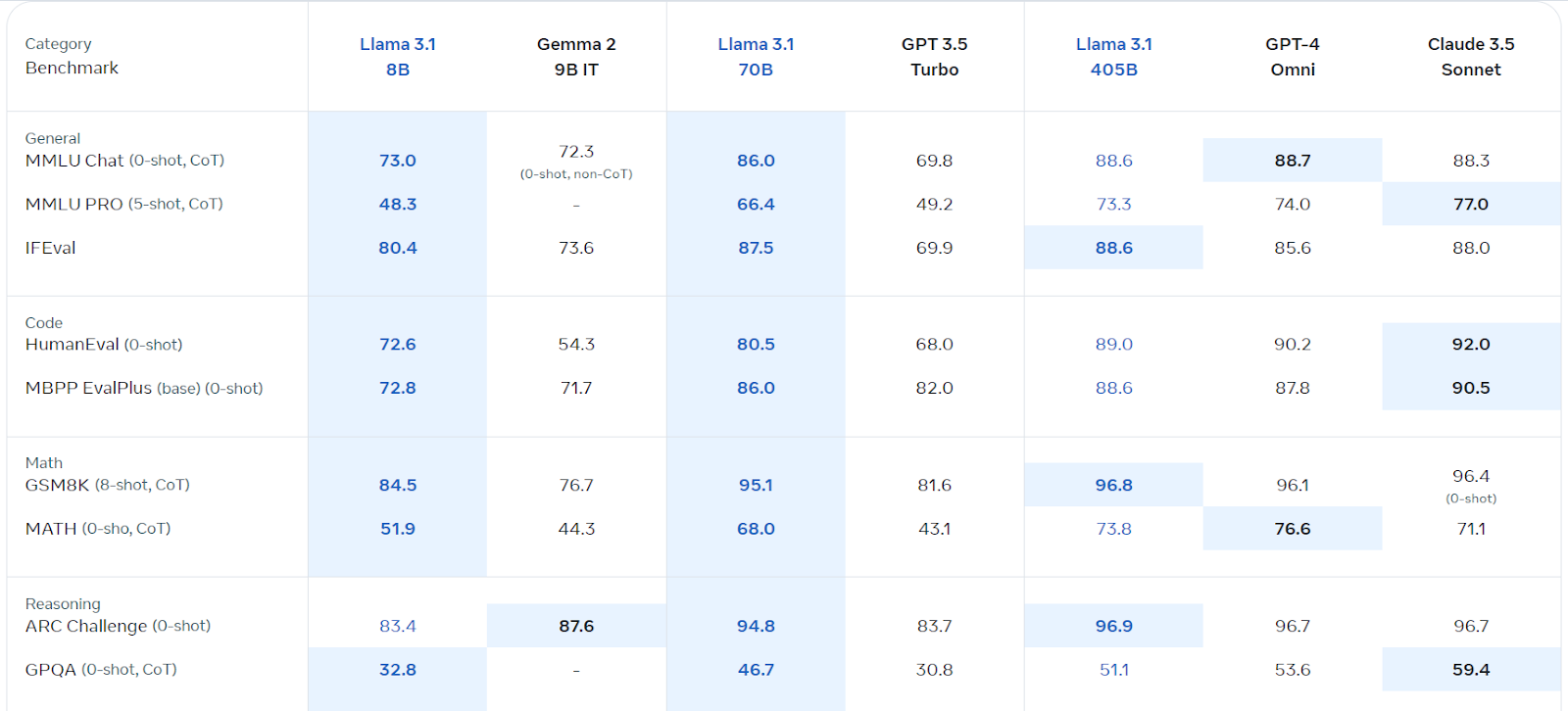

Llama 3.1 is Meta AI's multilingual large language model (LLM) for language understanding and generation. It is available in 8B, 70B, and 405B parameter versions and is an auto-regressive model built on an optimized transformer architecture. Trained on diverse public data, it supports eight languages and a 128K-token context window. It is released under a community license that permits commercial use, and Meta reports that it outperforms several competing models on a range of benchmarks.

Source: Llama 3.1 (meta.com)

Accessing and Using Llama 3.1 on Kaggle

We'll leverage Kaggle's free GPUs/TPUs. Follow these steps:

- Register on meta.com (using your Kaggle email).

- Access the Llama 3.1 Kaggle repository and request model access.

- Launch a Kaggle notebook using the provided "Code" button.

- Select your preferred model version and add it to the notebook.

- Install the necessary packages (`%pip install -U transformers accelerate`).

- Load the model and tokenizer:

      from transformers import AutoTokenizer, AutoModelForCausalLM, pipeline
      import torch

      # Local path of the model that was added to the Kaggle notebook
      base_model = "/kaggle/input/llama-3.1/transformers/8b-instruct/1"

      tokenizer = AutoTokenizer.from_pretrained(base_model)

      # Load the weights in half precision and let Accelerate place them on the available devices
      model = AutoModelForCausalLM.from_pretrained(
          base_model,
          return_dict=True,
          low_cpu_mem_usage=True,
          torch_dtype=torch.float16,
          device_map="auto",
          trust_remote_code=True,
      )

      # Wrap the model and tokenizer in a text-generation pipeline
      pipe = pipeline(
          "text-generation",
          model=model,
          tokenizer=tokenizer,
          torch_dtype=torch.float16,
          device_map="auto",
      )

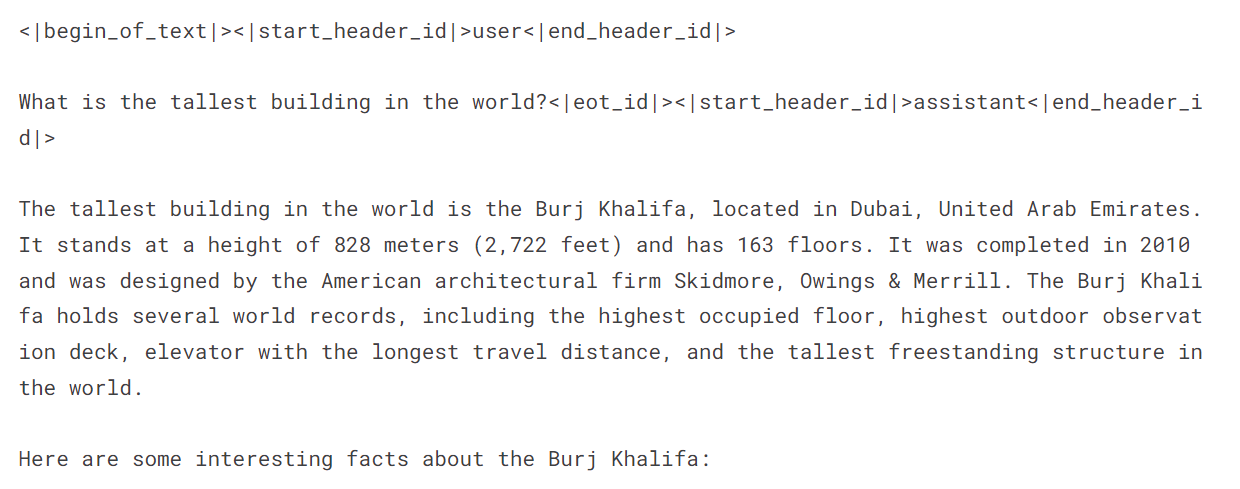

- Create prompts and run inference:

      # Format the conversation with the model's chat template
      messages = [{"role": "user", "content": "What is the tallest building in the world?"}]
      prompt = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

      # Sample up to 120 new tokens and print the prompt plus the completion
      outputs = pipe(prompt, max_new_tokens=120, do_sample=True)
      print(outputs[0]["generated_text"])

Fine-tuning Llama 3.1 for Mental Health Classification

Setup: Start a new Kaggle notebook with Llama 3.1, install the required packages (`bitsandbytes`, `transformers`, `accelerate`, `peft`, `trl`), and add the "Sentiment Analysis for Mental Health" dataset. Configure Weights & Biases with your API key.

Data Processing: Load the dataset, clean it (removing ambiguous categories: "Suicidal," "Stress," "Personality Disorder"), shuffle, and split into training, evaluation, and testing sets (using 3000 samples for efficiency). Create prompts incorporating statements and labels.
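The data-processing step can be sketched in plain Python. This is a minimal illustration, not the tutorial's exact code: the column names `statement` and `status`, the 80/10/10 split, the seed, and the prompt wording are all assumptions, and the four remaining labels are inferred from the categories that were removed.

```python
import random

# Categories dropped as ambiguous (compared case-insensitively).
REMOVED = {"suicidal", "stress", "personality disorder"}


def make_prompt(statement, label=None):
    """Build a classification prompt; append the label only for training rows."""
    prompt = (
        "Classify the text into Normal, Depression, Anxiety, or Bipolar, "
        "and return the answer as the corresponding mental health label.\n"
        f"text: {statement}\n"
        "label:"
    )
    return f"{prompt} {label}" if label is not None else prompt


def prepare_splits(rows, n_samples=3000, seed=10, train_frac=0.8, eval_frac=0.1):
    """Filter, shuffle, subsample, and split rows into train/eval/test lists."""
    kept = [r for r in rows if r["status"].strip().lower() not in REMOVED]
    random.Random(seed).shuffle(kept)  # seeded so the split is reproducible
    kept = kept[:n_samples]
    n_train = int(train_frac * len(kept))
    n_eval = int(eval_frac * len(kept))
    return kept[:n_train], kept[n_train:n_train + n_eval], kept[n_train + n_eval:]


# Tiny in-memory stand-in for the real dataset (hypothetical rows).
statuses = ["Normal", "Depression", "Stress", "Anxiety", "Bipolar"] * 4
rows = [{"statement": f"sample text {i}", "status": s} for i, s in enumerate(statuses)]

train, eval_rows, test = prepare_splits(rows, n_samples=10)
train_prompts = [make_prompt(r["statement"], r["status"]) for r in train]  # labels included
test_prompts = [make_prompt(r["statement"]) for r in test]                 # labels withheld
```

Training and evaluation prompts carry the label so the model learns to complete it, while test prompts stop at `label:` so the model has to supply the answer.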

Model Loading: Load the Llama 3.1 8B Instruct model with 4-bit quantization for memory efficiency. Load the tokenizer and set the pad token ID.
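To see why 4-bit loading matters, the sketch below estimates the weight footprint at different precisions and shows one way to load the model with `BitsAndBytesConfig`. The specific quantization settings (NF4, float16 compute, no double quantization) and the round 8-billion parameter count are assumptions for illustration, and the estimate ignores quantization constants, activations, and the KV cache.

```python
def approx_weight_gib(n_params, bits_per_param):
    """Rough size of the weights alone, in GiB."""
    return n_params * bits_per_param / 8 / 2**30


def load_4bit_model(model_path):
    """Load a causal LM with 4-bit weights, plus its tokenizer."""
    # Imported here so the heavy GPU libraries are only needed when this is called.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,
        bnb_4bit_quant_type="nf4",             # assumed quantization type
        bnb_4bit_use_double_quant=False,
        bnb_4bit_compute_dtype=torch.float16,  # matmuls run in half precision
    )
    model = AutoModelForCausalLM.from_pretrained(
        model_path,
        quantization_config=bnb_config,
        device_map="auto",
        torch_dtype=torch.float16,
    )
    tokenizer = AutoTokenizer.from_pretrained(model_path)
    # Reuse the end-of-sequence token for padding.
    tokenizer.pad_token_id = tokenizer.eos_token_id
    return model, tokenizer


fp16_gib = approx_weight_gib(8e9, 16)
int4_gib = approx_weight_gib(8e9, 4)
print(f"fp16 weights: ~{fp16_gib:.1f} GiB, 4-bit weights: ~{int4_gib:.1f} GiB")
```

In a Kaggle notebook the loader would be called as `model, tokenizer = load_4bit_model("/kaggle/input/llama-3.1/transformers/8b-instruct/1")`. At half precision the weights alone come to roughly 15 GiB, while 4-bit weights need under 4 GiB, which leaves headroom for training on a single notebook GPU.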

Pre-Fine-tuning Evaluation: Create functions to predict labels and evaluate model performance (accuracy, classification report, confusion matrix). Assess the model's baseline performance before fine-tuning.
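The prediction and evaluation helpers might look like the following sketch. It assumes prompts end with `label:` and that the label set is Normal, Depression, Anxiety, and Bipolar; `generate` is a hypothetical callable standing in for the text-generation pipeline, and the metrics are computed by hand here rather than with a metrics library.

```python
LABELS = ["Normal", "Depression", "Anxiety", "Bipolar"]  # assumed label set


def extract_label(generated_text, labels=LABELS, fallback="none"):
    """Return the first known label that appears after the final 'label:' marker."""
    answer = generated_text.split("label:")[-1].lower()
    hits = [(answer.find(l.lower()), l) for l in labels if l.lower() in answer]
    return min(hits)[1] if hits else fallback


def predict(prompts, generate):
    """`generate` maps a prompt string to the generated text (prompt included)."""
    return [extract_label(generate(p)) for p in prompts]


def evaluate(y_true, y_pred, labels=LABELS):
    """Return accuracy and a confusion matrix (rows: true label, columns: predicted)."""
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        if p in index:  # unparseable predictions count against accuracy only
            matrix[index[t]][index[p]] += 1
    return accuracy, matrix


# Stand-in generator so the sketch runs without a model.
def fake_generate(prompt):
    return (prompt + " Depression") if "sad" in prompt else (prompt + " Normal")


prompts = ["text: I feel sad\nlabel:", "text: nice day\nlabel:"]
y_true = ["Depression", "Anxiety"]
y_pred = predict(prompts, fake_generate)
accuracy, matrix = evaluate(y_true, y_pred)
print(accuracy, matrix)
```

With a real model, `generate` could be something like `lambda p: pipe(p, max_new_tokens=4, do_sample=False)[0]["generated_text"]`. Running the same `evaluate` call before and after fine-tuning gives a like-for-like comparison; scikit-learn's `classification_report` and `confusion_matrix` can replace the hand-rolled metrics if per-class precision and recall are needed.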

Fine-tuning: Configure LoRA using appropriate parameters. Set up the training arguments (adjust as needed for your environment). Train the model using `SFTTrainer` and monitor progress in Weights & Biases.
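To make the LoRA configuration concrete: for each adapted linear layer with `d_in` inputs and `d_out` outputs, LoRA trains two low-rank matrices totalling `r * (d_in + d_out)` parameters. The sketch below counts those parameters for Llama 3.1 8B and builds a `LoraConfig`. The rank, alpha, dropout, and the choice to target all seven projection layers are hypothetical values, not the tutorial's settings.

```python
# Llama 3.1 8B projection shapes as (in_features, out_features).
HIDDEN, INTERMEDIATE, KV_DIM, NUM_LAYERS = 4096, 14336, 1024, 32
PROJECTIONS = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV_DIM),
    "v_proj": (HIDDEN, KV_DIM),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_trainable_params(rank, targets=PROJECTIONS, num_layers=NUM_LAYERS):
    """Count LoRA parameters: rank * (d_in + d_out) per adapted layer."""
    per_layer = sum(rank * (d_in + d_out) for d_in, d_out in targets.values())
    return per_layer * num_layers


# Hypothetical hyperparameters; tune them for your data and hardware.
lora_kwargs = dict(
    r=16,
    lora_alpha=32,
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
    target_modules=list(PROJECTIONS),
)


def build_lora_config(**overrides):
    """Create a peft LoraConfig from the defaults above."""
    from peft import LoraConfig  # imported lazily; only needed when training

    return LoraConfig(**{**lora_kwargs, **overrides})


n_trainable = lora_trainable_params(lora_kwargs["r"])
print(f"LoRA trainable parameters at r={lora_kwargs['r']}: {n_trainable:,}")
```

The resulting config is what gets handed to `SFTTrainer` through its `peft_config` argument. Other trainer arguments differ between TRL releases, so check the version installed in your notebook before copying a training setup. Under these assumptions only about 42 million parameters are trained, a small fraction of the 8 billion in the base model.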

Post-Fine-tuning Evaluation: Re-evaluate the model's performance after fine-tuning.

Merging and Saving: In a new Kaggle notebook, merge the fine-tuned adapter with the base model using `PeftModel.from_pretrained()` and `model.merge_and_unload()`. Test the merged model, then save and push the final model and tokenizer to the Hugging Face Hub.
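The merge step could be wrapped in a helper like the one below. This is a sketch under stated assumptions: the function name, `adapter_path`, `repo_id`, and `output_dir` are hypothetical, the base model is reloaded in half precision rather than 4-bit before merging, and you are assumed to be logged in to the Hugging Face Hub already.

```python
def merge_and_push(base_model_path, adapter_path, repo_id, output_dir="merged-model"):
    """Merge a LoRA adapter into its base model, save it locally, and push it to the Hub."""
    # Imported here so the heavy libraries are only needed when this is called.
    import torch
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(base_model_path)

    # Reload the base model in half precision (not 4-bit) so the merge is applied
    # to full-width weights.
    base = AutoModelForCausalLM.from_pretrained(
        base_model_path,
        torch_dtype=torch.float16,
        device_map="auto",
        low_cpu_mem_usage=True,
    )

    # Attach the adapter, then fold its weights into the base layers.
    model = PeftModel.from_pretrained(base, adapter_path)
    model = model.merge_and_unload()

    # Save locally, then upload both the model and the tokenizer.
    model.save_pretrained(output_dir)
    tokenizer.save_pretrained(output_dir)
    model.push_to_hub(repo_id)
    tokenizer.push_to_hub(repo_id)
    return model, tokenizer
```

After merging, the returned model behaves like an ordinary Transformers model, so it can be tested with the same text-generation pipeline used for the base model before it is published.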

Remember to replace paths such as /kaggle/input/... with your own file paths. This condensed walkthrough gives a high-level overview with key code snippets. Always prioritize ethical considerations when working with sensitive data.
