AdalFlow: A PyTorch Library for Streamlining LLM Task Pipelines

AdalFlow, spearheaded by Li Yin, bridges the gap between Retrieval-Augmented Generation (RAG) research and practical application. Taking its design cues from PyTorch, it addresses the limitations of existing frameworks, which either lack real-world adaptability or are too complex for research use. AdalFlow offers a unified library featuring robust string manipulation, flexible tools, diverse output formats, and model monitoring (TensorBoard integration). Its aim is to let researchers and engineers concentrate on prompts, datasets, evaluations, and fine-tuning, which speeds up experimentation and simplifies the transition from research to production deployment.

Key Features and Benefits:

- Unified Framework: Simplifies LLM task pipelines, bridging the research-production divide.

- Broad Applicability: Suitable for AI researchers, ML engineers, developers, and organizations across various AI application development stages.

- PyTorch-Inspired Design: Minimal abstraction, strong string processing, and versatile tools for customization and fine-tuning NLP and Generative AI tasks.

- Optimized Performance: Enhanced token efficiency and performance through a unified optimization framework, supporting both zero-shot and few-shot prompt optimization.

- Simplified Development: Core components like AdalComponent and Trainer streamline AI application development and deployment.

Target Audience:

AdalFlow caters to a diverse user base:

- AI Researchers: Provides a flexible, minimally abstracted tool for LLM experimentation, prompt optimization, and model fine-tuning across various NLP tasks.

- ML Engineers: Offers a customizable, modular framework for building, training, and automating LLM pipelines for production applications (e.g., chatbots, summarization tools, RAG systems, autonomous agents).

- Developers: Provides an easy-to-use, PyTorch-inspired library offering full control over prompt templates, model selection, output parsing, robust optimization, and training capabilities.

- Organizations: Enables teams to streamline LLM workflows with a powerful, token-efficient solution scalable from research to production.

Core Functionality and Architecture:

AdalFlow is a "PyTorch Library for Building and Auto-Optimizing Any LLM Task Pipeline." This lightweight, modular library simplifies the development and optimization of LLM task pipelines. Its design philosophy, inspired by PyTorch, prioritizes minimal abstraction while maximizing flexibility. It supports a wide range of tasks, from Generative AI (chatbots, translation, summarization, code generation) to classical NLP tasks (text classification, named entity recognition).

Central to AdalFlow are two key components:

- Component: For defining pipelines.

- DataClass: For managing data interactions with LLMs.

This architecture provides developers with complete control over prompt templates, model selection, and output parsing. AdalFlow also incorporates a unified framework for auto-optimization, enabling token-efficient and high-performing prompt optimization. The AdalComponent and Trainer facilitate the creation of trainable task pipelines supporting custom training and validation steps, optimizers, evaluators, and loss functions.
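The division of labor between a pipeline component and a data class can be illustrated with a minimal plain-Python sketch. It deliberately does not use AdalFlow's actual API: `Component`, `QAOutput`, `QAPipeline`, and `fake_llm` are hypothetical stand-ins invented for this illustration, and the model call is a stub so the example runs offline.

```python
import json
from dataclasses import dataclass, fields
from typing import Callable


@dataclass
class QAOutput:
    """Structured answer expected back from the model (hypothetical schema)."""
    answer: str
    confidence: float

    @classmethod
    def schema_hint(cls) -> str:
        # Describe the expected JSON keys so the prompt can request them.
        return ", ".join(
            f"{f.name} ({getattr(f.type, '__name__', str(f.type))})"
            for f in fields(cls)
        )

    @classmethod
    def from_json(cls, text: str) -> "QAOutput":
        # Parse the raw model string into a typed object.
        data = json.loads(text)
        return cls(answer=str(data["answer"]), confidence=float(data["confidence"]))


class Component:
    """Tiny base class: subclasses implement call(); instances are callable."""

    def call(self, *args, **kwargs):
        raise NotImplementedError

    def __call__(self, *args, **kwargs):
        return self.call(*args, **kwargs)


class QAPipeline(Component):
    """Pipeline that owns the prompt template, the model, and output parsing."""

    TEMPLATE = "Answer the question as JSON with keys: {schema}.\nQuestion: {question}"

    def __init__(self, model: Callable[[str], str]):
        self.model = model  # any callable mapping prompt text to response text

    def call(self, question: str) -> QAOutput:
        prompt = self.TEMPLATE.format(
            schema=QAOutput.schema_hint(), question=question
        )
        raw = self.model(prompt)
        return QAOutput.from_json(raw)


def fake_llm(prompt: str) -> str:
    # Stub standing in for a real model client; always returns the same JSON.
    return json.dumps({"answer": "Paris", "confidence": 0.9})


pipeline = QAPipeline(model=fake_llm)
result = pipeline("What is the capital of France?")
print(result)
```

Because the template, model, and parser are plain attributes of one class, any of them can be swapped independently, which is the kind of control the paragraph above describes.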

Design Principles:

- Simplicity: AdalFlow uses at most three layers of abstraction, which keeps the code clear and reduces complexity.

- Quality: Prioritizes high-quality core components over a large number of integrations.

- Optimization: Emphasizes pipeline optimization through robust logging, observability, and configurable tools.

Why Choose AdalFlow?

- PyTorch-Inspired: Powerful, lightweight, modular, and robust.

- Model-Agnostic: Supports various LLMs and applications (RAG, agents, classical NLP).

- User-Friendly: Achieves high performance even with basic prompting.

- Unified Optimization: Supports zero-shot and few-shot prompt optimization.

- State-of-the-Art: Builds on advanced techniques such as TextGrad and DSPy.

- High Accuracy: Employs innovations such as Text-Grad 2.0 and Learn-to-Reason Few-shot In-Context Learning.
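To make the difference between zero-shot and few-shot prompt optimization concrete, the following is a minimal sketch under loud assumptions. It does not reproduce AdalFlow's own optimizers; it swaps in the simplest possible technique, an exhaustive search over demonstration subsets scored on a validation set. `build_prompt`, `toy_model`, `accuracy`, and `select_demos` are hypothetical names, and `toy_model` is a deterministic stub rather than a real LLM.

```python
from itertools import combinations
from typing import Callable, List, Sequence, Tuple

Example = Tuple[str, str]  # (input text, label)


def build_prompt(instruction: str, demos: Sequence[Example], query: str) -> str:
    # Zero-shot when demos is empty; few-shot otherwise.
    parts = [instruction]
    for text, label in demos:
        parts.append(f"Input: {text}\nLabel: {label}")
    parts.append(f"Input: {query}\nLabel:")
    return "\n\n".join(parts)


def toy_model(prompt: str) -> str:
    """Stub model: copies the label of the first demo sharing a word with the query."""
    blocks = prompt.split("\n\n")[1:]  # drop the instruction block
    *demo_blocks, query_block = blocks
    query_words = set(query_block.splitlines()[0][len("Input: "):].split())
    for block in demo_blocks:
        text_line, label_line = block.splitlines()
        demo_words = set(text_line[len("Input: "):].split())
        if demo_words & query_words:
            return label_line[len("Label: "):]
    return "neutral"  # fallback when no demo matches


def accuracy(model: Callable[[str], str], instruction: str,
             demos: Sequence[Example], val_set: Sequence[Example]) -> float:
    hits = sum(model(build_prompt(instruction, demos, x)) == y for x, y in val_set)
    return hits / len(val_set)


def select_demos(model: Callable[[str], str], instruction: str,
                 candidates: List[Example], val_set: Sequence[Example],
                 k: int) -> Tuple[Tuple[Example, ...], float]:
    """Score every k-sized demo subset on val_set and return the best one."""
    best_demos: Tuple[Example, ...] = ()
    best_score = -1.0
    for subset in combinations(candidates, k):
        score = accuracy(model, instruction, subset, val_set)
        if score > best_score:  # strict: the first best subset wins ties
            best_demos, best_score = subset, score
    return best_demos, best_score


instruction = "Classify the sentiment as positive, negative, or neutral."
candidates = [("great film", "positive"), ("awful acting", "negative"), ("fine day", "neutral")]
val_set = [("great movie", "positive"), ("awful plot", "negative"), ("great cast", "positive")]

zero_shot = accuracy(toy_model, instruction, (), val_set)
best_demos, best_score = select_demos(toy_model, instruction, candidates, val_set, k=2)
print(f"zero-shot accuracy: {zero_shot:.2f}")
print(f"best few-shot accuracy: {best_score:.2f} with demos {best_demos}")
```

Exhaustive search is only feasible for tiny candidate pools; it is used here because it makes the objective (validation accuracy as a function of the chosen demonstrations) easy to see.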


The above is the detailed content of Optimizing LLM Tasks with AdalFlow. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

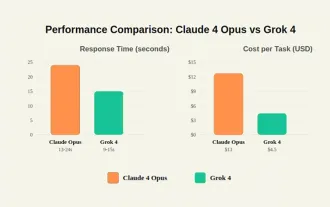

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

But we probably won’t have to wait even 10 years to see one. In fact, what could be considered the first wave of truly useful, human-like machines is already here. Recent years have seen a number of prototypes and production models stepping out of t

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Until the previous year, prompt engineering was regarded a crucial skill for interacting with large language models (LLMs). Recently, however, LLMs have significantly advanced in their reasoning and comprehension abilities. Naturally, our expectation

What Are The 7 Types Of AI Agents?

Jul 11, 2025 am 11:08 AM

What Are The 7 Types Of AI Agents?

Jul 11, 2025 am 11:08 AM

Picture something sophisticated, such as an AI engine ready to give detailed feedback on a new clothing collection from Milan, or automatic market analysis for a business operating worldwide, or intelligent systems managing a large vehicle fleet.The

Concealed Command Crisis: Researchers Game AI To Get Published

Jul 13, 2025 am 11:08 AM

Concealed Command Crisis: Researchers Game AI To Get Published

Jul 13, 2025 am 11:08 AM

Scientists have uncovered a clever yet alarming method to bypass the system. July 2025 marked the discovery of an elaborate strategy where researchers inserted invisible instructions into their academic submissions — these covert directives were tail

United Nations Considering These Four Crucial Actions To Save The World From Dire AGI And Killer AI Superintelligence

Jul 13, 2025 am 11:09 AM

United Nations Considering These Four Crucial Actions To Save The World From Dire AGI And Killer AI Superintelligence

Jul 13, 2025 am 11:09 AM

Be aware that the United Nations has had an ongoing interest in how AI is advancing and what kinds of international multilateral arrangements and collaborations ought to be taking place (see my coverage at the link here). The distinctive element of t

Grok 4 is Here and it's Simply Brilliant! - Analytics Vidhya

Jul 12, 2025 am 09:14 AM

Grok 4 is Here and it's Simply Brilliant! - Analytics Vidhya

Jul 12, 2025 am 09:14 AM

“It’s smarter than almost all graduate students in all disciplines – Elon Musk.” Elon Musk and his Grok team are back with their latest and best model to date: Grok 4. It was only 3 months ago that this team of e