Zero-shot Image Classification with OpenAI's CLIP ViT-L14

Apr 11, 2025 am 10:04 AM

OpenAI's CLIP (Contrastive Language–Image Pre-training) model, specifically the CLIP ViT-L14 variant, represents a significant advancement in multimodal learning, bridging computer vision and natural language processing. It represents both images and text as vectors in a shared embedding space, which enables applications such as zero-shot classification and image retrieval.

Key Capabilities of CLIP ViT-L14

CLIP ViT-L14's strength lies in its ability to perform zero-shot image classification and identify image-text similarities. This makes it highly versatile for tasks such as image clustering and image retrieval. Its effectiveness stems from its architecture and training methodology, making it a valuable tool in various multimodal machine learning projects.

Understanding the Model

- Architecture: The model uses a Vision Transformer (ViT) as its image encoder and a masked self-attention transformer as its text encoder. Both encoders are trained with a contrastive loss, so image and text embeddings can be compared directly by their similarity.

- Process: Both images and text are converted into vector representations. During pre-training on a vast dataset of image-caption pairs, the model learns to predict which images and text descriptions belong together.
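The pairing objective described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than OpenAI's training code: the function names are hypothetical, the embeddings are random stand-ins for encoder outputs, and the temperature is a fixed illustrative value (in CLIP it is a learned parameter).

```python
import numpy as np

def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so dot products equal cosine similarities."""
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def log_softmax(logits: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def clip_style_loss(image_emb: np.ndarray, text_emb: np.ndarray,
                    temperature: float = 0.07) -> float:
    """Symmetric cross-entropy over an N x N image-text similarity matrix.

    Row i of image_emb is assumed to be paired with row i of text_emb.
    """
    img = l2_normalize(image_emb)
    txt = l2_normalize(text_emb)
    logits = img @ txt.T / temperature   # [N, N] scaled cosine similarities
    idx = np.arange(len(logits))         # matching pairs sit on the diagonal
    # For each image, how well is its own caption ranked among all captions?
    loss_images = -log_softmax(logits, axis=1)[idx, idx].mean()
    # For each caption, how well is its own image ranked among all images?
    loss_texts = -log_softmax(logits, axis=0)[idx, idx].mean()
    return float((loss_images + loss_texts) / 2)

# Random vectors standing in for encoder outputs (assumption for illustration).
rng = np.random.default_rng(0)
emb = rng.normal(size=(4, 8))
aligned_loss = clip_style_loss(emb, emb)         # every pair matches
shuffled_loss = clip_style_loss(emb, emb[::-1])  # every pair mismatched
```

The loss is low when matching pairs score higher than all mismatched pairs in the batch, which is what pushes image and text embeddings of the same concept toward each other.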

CLIP's Distinguishing Features

CLIP's efficiency stems from its ability to learn from diverse, noisy data, enabling strong zero-shot transfer learning. The choice of ViT architecture over ResNet contributes to its computational efficiency. Its flexibility arises from leveraging natural language supervision, surpassing the limitations of datasets like ImageNet. This allows for high zero-shot performance across various tasks, including object classification, OCR, and geo-localization.

Performance and Benchmarks

CLIP ViT-L14 achieves higher accuracy than the smaller CLIP variants, particularly when generalizing to unseen image classification tasks. It reaches approximately 75% zero-shot accuracy on ImageNet, outperforming models like CLIP ViT-B32 and CLIP ViT-B16.

Practical Implementation

Using CLIP ViT-L14 involves leveraging pre-trained weights and a suitable processor. The following steps illustrate a basic implementation:

- Import Libraries: Import the necessary libraries, such as PIL, requests, and transformers.

- Load Pre-trained Model: Load the pre-trained openai/clip-vit-large-patch14 model and processor.

- Image Processing: Load an image (e.g., from a URL) using PIL and requests.

- Inference: Use the processor to prepare image and text inputs for the model. Perform inference to obtain image-text similarity scores.
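The steps above can be sketched roughly as follows, using the Hugging Face transformers classes CLIPModel and CLIPProcessor. The helper names (make_prompts, classify_image), the "a photo of a ..." prompt template, and the 30-second timeout are assumptions made for this illustration, not part of the original walkthrough. The first call downloads the model weights, so it needs network access and some disk space.

```python
import io
from typing import Dict, List

MODEL_ID = "openai/clip-vit-large-patch14"

def make_prompts(labels: List[str]) -> List[str]:
    """Wrap each class name in a caption-like template (a common convention, assumed here)."""
    return [f"a photo of a {label}" for label in labels]

def classify_image(image_url: str, labels: List[str]) -> Dict[str, float]:
    """Return one probability per candidate label for the image at image_url."""
    # Heavy dependencies are imported lazily so make_prompts works without them.
    import requests
    import torch
    from PIL import Image
    from transformers import CLIPModel, CLIPProcessor

    # Load the pre-trained model and its matching processor.
    model = CLIPModel.from_pretrained(MODEL_ID)
    processor = CLIPProcessor.from_pretrained(MODEL_ID)

    # Download the image and decode it with PIL.
    response = requests.get(image_url, timeout=30)
    response.raise_for_status()
    image = Image.open(io.BytesIO(response.content)).convert("RGB")

    # Tokenize the prompts and preprocess the image in one call.
    inputs = processor(text=make_prompts(labels), images=image,
                       return_tensors="pt", padding=True)
    with torch.no_grad():
        outputs = model(**inputs)

    # logits_per_image has shape [1, len(labels)]; softmax turns it into probabilities.
    probs = outputs.logits_per_image.softmax(dim=1)[0]
    return {label: float(p) for label, p in zip(labels, probs)}
```

Calling classify_image with an image URL and a list such as ["cat", "dog"] returns a dictionary mapping each label to its probability. Because the labels are supplied at inference time, the candidate classes can be changed without retraining.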

Limitations

While powerful, CLIP ViT-L14 has limitations. It can struggle with fine-grained classification and with tasks that require precise object counting.

Applications

CLIP's versatility extends to various applications:

- Image Search: Enhanced image retrieval based on text descriptions.

- Image Captioning: Generating descriptive captions for images.

- Zero-Shot Classification: Classifying images without needing labeled training data for specific classes.
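As a rough sketch of the image search use case, text-to-image retrieval reduces to ranking stored image embeddings by cosine similarity to a query embedding. The function name rank_images and the toy 3-dimensional vectors are assumptions for illustration; in practice the embeddings would come from the model's image and text encoders (for example, get_image_features and get_text_features on the transformers CLIPModel).

```python
import numpy as np

def rank_images(query_emb: np.ndarray, image_embs: np.ndarray, top_k: int = 3):
    """Return (index, cosine similarity) for the top_k images closest to the query."""
    query = query_emb / np.linalg.norm(query_emb)
    images = image_embs / np.linalg.norm(image_embs, axis=1, keepdims=True)
    scores = images @ query               # one cosine similarity per image
    order = np.argsort(-scores)[:top_k]   # highest similarity first
    return [(int(i), float(scores[i])) for i in order]

# Toy vectors standing in for real CLIP embeddings (assumption for illustration).
image_embs = np.array([[1.0, 0.0, 0.0],
                       [0.0, 1.0, 0.0],
                       [0.7, 0.7, 0.0]])
query_emb = np.array([0.9, 0.1, 0.0])

results = rank_images(query_emb, image_embs, top_k=2)
for index, score in results:
    print(f"image {index}: similarity {score:.3f}")
```

Since image embeddings do not depend on the query, they can be computed once and stored, leaving only the text query to be encoded at search time.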

Conclusion

CLIP ViT-L14 showcases the potential of multimodal models in computer vision. Its efficiency, zero-shot capabilities, and wide range of applications make it a valuable tool. However, awareness of its limitations is crucial for effective implementation.


The above is the detailed content of Zero-shot Image Classification with OpenAI's CLIP VIT-L14. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

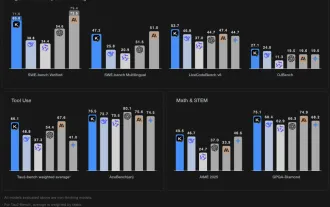

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

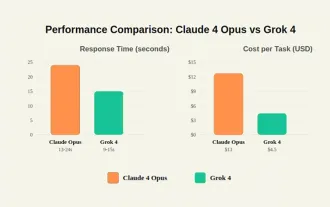

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

But we probably won’t have to wait even 10 years to see one. In fact, what could be considered the first wave of truly useful, human-like machines is already here. Recent years have seen a number of prototypes and production models stepping out of t

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Until the previous year, prompt engineering was regarded a crucial skill for interacting with large language models (LLMs). Recently, however, LLMs have significantly advanced in their reasoning and comprehension abilities. Naturally, our expectation

Leia's Immersity Mobile App Brings 3D Depth To Everyday Photos

Jul 09, 2025 am 11:17 AM

Leia's Immersity Mobile App Brings 3D Depth To Everyday Photos

Jul 09, 2025 am 11:17 AM

Built on Leia’s proprietary Neural Depth Engine, the app processes still images and adds natural depth along with simulated motion—such as pans, zooms, and parallax effects—to create short video reels that give the impression of stepping into the sce

What Are The 7 Types Of AI Agents?

Jul 11, 2025 am 11:08 AM

What Are The 7 Types Of AI Agents?

Jul 11, 2025 am 11:08 AM

Picture something sophisticated, such as an AI engine ready to give detailed feedback on a new clothing collection from Milan, or automatic market analysis for a business operating worldwide, or intelligent systems managing a large vehicle fleet.The

These AI Models Didn't Learn Language, They Learned Strategy

Jul 09, 2025 am 11:16 AM

These AI Models Didn't Learn Language, They Learned Strategy

Jul 09, 2025 am 11:16 AM

A new study from researchers at King’s College London and the University of Oxford shares results of what happened when OpenAI, Google and Anthropic were thrown together in a cutthroat competition based on the iterated prisoner's dilemma. This was no

Concealed Command Crisis: Researchers Game AI To Get Published

Jul 13, 2025 am 11:08 AM

Concealed Command Crisis: Researchers Game AI To Get Published

Jul 13, 2025 am 11:08 AM

Scientists have uncovered a clever yet alarming method to bypass the system. July 2025 marked the discovery of an elaborate strategy where researchers inserted invisible instructions into their academic submissions — these covert directives were tail